*This content was translated by AI.

What if a celebrity you trust appears on social media and recommends an investment?

What if the voice on the phone, which sounds unmistakably like your daughter, is an elaborate fake? As AI advances, the boundary between real and fake is disappearing. Deepfake technology needs only a few seconds of audio and a single photo. With it, anyone can impersonate a celebrity.

Classic crimes such as celebrity-impersonation advertising, voice phishing, and romance scams are growing more sophisticated on the wings of AI. Can we still tell what is real when faced with a 'real-looking fake'? What should we believe, and what should we doubt? "Tracking 60 Minutes" examined the crimes that have dug deep into our daily lives in the era of the "AI Great Transformation."

■ Deepfake, the Technology That Engineers Trust

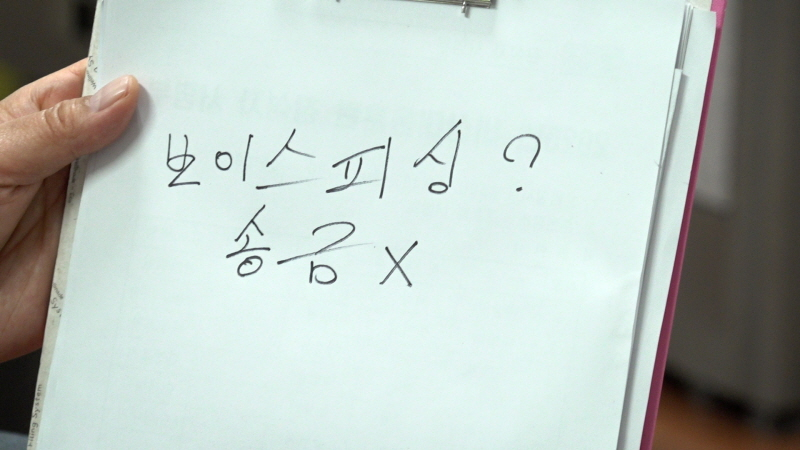

In April last year, Lee Hye-ran (pseudonym), who worked at a shop in a Seoul subway station, received a phone call. The caller demanded 10 million won in cash, saying, "I have your daughter with me." The voice phisher pressured Lee by playing what sounded like the daughter's crying voice. However, a station employee who overheard the call stepped in, and the money transfer was prevented. The scam was averted, but a question remained: how on earth did they replicate the daughter's voice so precisely?

Experts say that about 10 seconds of recorded speech is enough to clone a person's voice with AI. Even the voice in a short video casually posted on social media can be exploited for crime.

AI deepfakes can replicate not only a real person's voice but also the face. These techniques are also used in romance scams. Nam Sang-hee (pseudonym) got to know a man through social media in 2024. The man chatted and made video calls with Nam regularly to build a rapport, then introduced a famous investment expert. Nam trusted the expert and invested 200 million won, but two months later withdrawals were blocked and all the money was lost. As it turned out, every face Nam had seen in the video calls and lecture videos was a 'fake' created with deepfake technology.

■ Deepfake sex crimes that hit everyday life

As deepfake technology has become commonplace, the number of deepfake sex crimes is also rising sharply. Jeong Ju-yeon (pseudonym) found dozens of nude photos of women on her ex-partner's phone. She had never seen the photos before, yet they looked familiar. The face in them was none other than Jeong's own. The ex-partner, Choi, had produced nude photos using the faces of more than 40 women: not only Jeong, the girlfriend at the time, but also Jeong's acquaintances, influencers, and celebrities. The deepfake site Choi used could generate hundreds or even thousands of nude images from a single photo.

Because anyone can easily make and distribute deepfake photos, they have spread like a fad among teenagers. In 2024, a high school student in the Incheon area committed deepfake sex crimes targeting teachers at the middle school he had graduated from. Teachers' photos from the graduation album were turned into deepfakes, annotated with personal information, and distributed on Telegram. An investigation identified the perpetrator and a disciplinary committee was convened, but the disciplinary action was canceled when the student abruptly dropped out. Teachers say penalties for deepfake sex crimes must be strengthened to keep schools safe.

■ What's wrong with celebrities? Deepfake false advertising

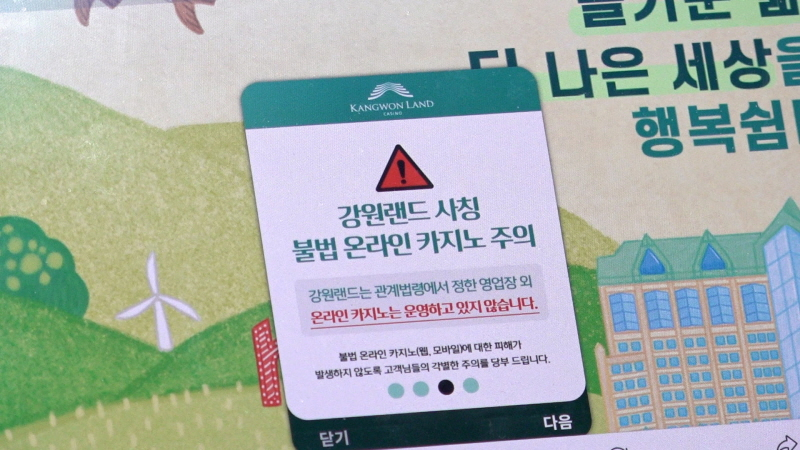

The easiest targets of deepfake crime are celebrities, whose faces are widely known. In August last year, a video spread online showing national soccer team player Son Heung-min promoting an illegal gambling app with the line, "You can enjoy Kangwon Land Casino online." However, the Son Heung-min in the video was not the real person but a 'fake' made with deepfake technology. Yoo Soo-jin, a famous investment expert, is also a victim of deepfake advertising. Videos replicating Yoo's face and voice are used to promote illegal investment schemes, prompting a steady stream of complaints from victims. Yoo tried to report the ads to have them stopped, but was told only that there was no clear remedy.

■ Fast-developing AI technology, what is our current state?

Deepfake technology has developed at a rapid pace and is now sophisticated enough to astonish even experts. Now that a few lines of commands can produce a video that looks more real than the real thing, how far has the technology for preventing 'fake' crimes come?

A telecommunications company has developed and commercialized its own program to prevent "deep voice" (AI voice-cloning) crimes. Soongsil University's AI Security Research Center is focusing on defensive technology to block the risks of deep voice. Even the National Forensic Service, which studies and identifies AI crimes, says it is not easy to keep up with how quickly those crimes are evolving. Amid a flood of AI-generated content that is 'more real than real,' experts urge people to develop the AI literacy needed to judge the authenticity of the information pouring in.

As AI technology develops rapidly, related crimes are increasing by the day. What should we prepare as we stand at the threshold of a new AI world? The 1,439th episode of "Tracking 60 Minutes," titled "AI, Crime, and the Real Gone," airs on KBS 1TV at 10 p.m. on the 9th.

<© STARNEWS. All rights reserved. No reproduction or redistribution allowed.>
